Altman Tells OpenAI Staff Military Decisions Rest With Government

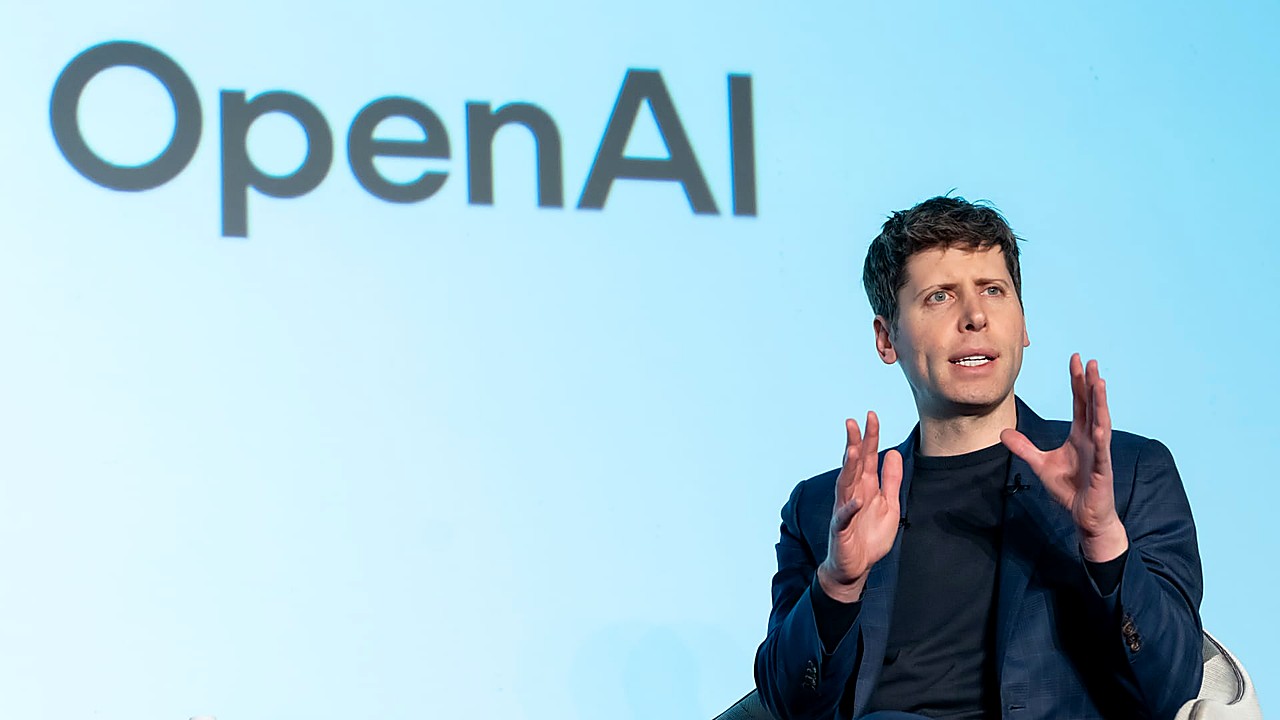

Sam Altman told OpenAI employees that “operational decisions” made by the military are ultimately the responsibility of the U.S. government, according to CNBC.

Altman made the remarks internally to staff while addressing how OpenAI’s technology could be used in defense contexts. His comments distinguished between OpenAI providing tools and the government deciding how those tools are used in military operations.

The discussion comes as OpenAI expands its work with the U.S. government. OpenAI has reached a deal to deploy its AI models on a U.S. Department of War classified network, according to AOL.com. The agreement places OpenAI’s technology closer to sensitive national security environments and raises the stakes around governance, oversight, and accountability.

Altman’s message to staff underscores a core tension facing major AI developers as their products move into higher-risk settings. AI systems can be used for analysis, planning, and other tasks that may influence real-world outcomes. When that work intersects with defense and classified systems, questions intensify over who is responsible for how tools are used, what safeguards exist, and how companies enforce internal policies.

The issue has also prompted scrutiny outside the company. The BBC has reported that OpenAI changed its deal with the U.S. military after backlash, a sign that partnerships involving defense applications can create reputational and ethical pressure even when security restrictions limit the details available.

For OpenAI, the debate is not just about a single contract. It goes to the company’s evolving role as a provider of powerful general-purpose AI models used by businesses, consumers, and governments. As those models become more capable and more widely embedded in institutional decision-making, internal debates about acceptable use are likely to become more frequent and more consequential.

What happens next will depend on the scope of OpenAI’s government deployments and how the company communicates its guardrails to employees and the public. Any further announcements about government partnerships, security arrangements, or product limitations could shape how OpenAI’s workforce and outside observers judge the company’s approach to defense-related work.

Altman’s statement points to a clear dividing line he wants employees to understand: OpenAI may supply the tools, but the government is responsible for military operational decisions made with them.