Sam Altman Apologizes For Failure To Flag Canadian Mass Shooter

OpenAI CEO Sam Altman has apologized to a community in Canada after the company failed to report conversations between a mass shooter and its AI chatbot to law enforcement, according to multiple published reports.

The apology was directed to the community of Tumbler Ridge, British Columbia, as reported by CBC and echoed by other outlets. Reports said Altman expressed regret that OpenAI did not flag the shooter’s account and did not warn police before the attack.

The reports describe the case as a mass shooting tied to a school, and they say the shooter had used ChatGPT before carrying out the violence. CP24 and CBS News described Altman as "deeply sorry" and framed the apology around the company's failure to alert authorities to the shooter's account.

CNN reported that Altman apologized to the Canadian community after OpenAI did not flag the shooter's conversations with its AI system, and Al Jazeera and other outlets carried similar accounts of the apology.

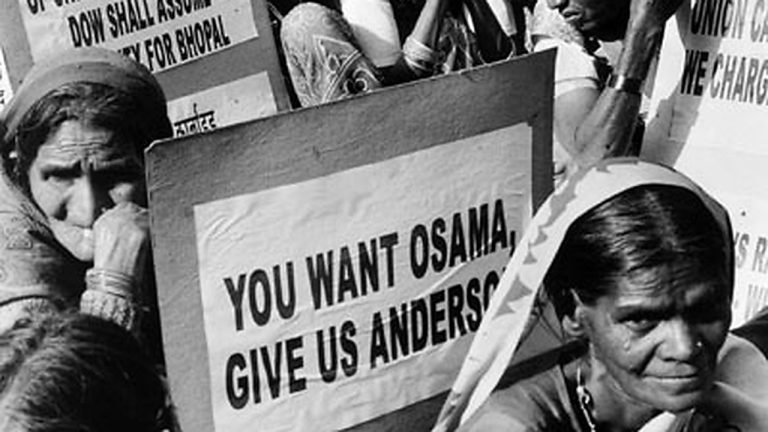

The development matters because it focuses renewed attention on how AI companies handle safety signals, potential threats, and escalation to law enforcement. The reports indicate that the issue is not only what a user asked a chatbot, but whether and how a company should identify credible risks and act quickly when conversations suggest imminent harm.

It also underscores the high stakes of trust for communities affected by violence. An apology from the CEO of one of the most prominent AI firms signals that the company views the failure as serious and that the consequences extend beyond product policy disputes to real-world harm.

At the same time, the reports point to a difficult intersection of safety practices, user privacy, and operational decision-making. Deciding when and how to report potential threats can involve internal rules, judgments about credibility, and coordination with authorities, all areas that have become central as AI tools have come into wide use.

What happens next will likely include scrutiny of OpenAI’s internal safety processes and any changes the company may make to how it reviews and escalates certain conversations. The reports do not provide details of specific policy changes, but they frame Altman’s apology as an acknowledgment that the company did not do enough in this case.

The case may also draw responses from Canadian officials, local leaders, and the broader technology industry, as governments and companies face questions about standards for reporting potential threats that surface through online platforms and AI services.

Altman's apology, as reported, amounts to a public admission that even leading AI firms can miss warning signs, and that such misses can carry consequences far beyond the screen.