Ontario Audit Finds AI Scribes Put Wrong Facts In Medical Notes

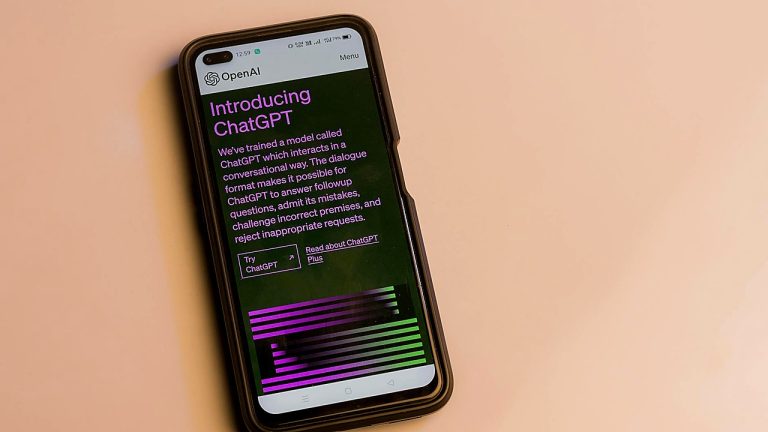

Ontario’s auditor has raised concerns about the accuracy of AI “note takers” being used in doctors’ offices, finding the tools routinely get basic facts wrong in clinical documentation.

The finding focuses on software designed to listen to patient visits and generate medical notes for clinicians. Auditors said the AI systems produced errors that included missed details and incorrect information, undermining the reliability of records that can guide diagnoses, prescriptions, referrals, and follow-up care.

The review centers on Ontario, where the use of automated documentation tools has been expanding as health providers look for ways to reduce administrative burden and speed up charting. Auditors examined outputs from the AI note-taking systems and reported repeated problems with factual accuracy in the generated notes.

The concerns are not about minor stylistic issues, but about mistakes that can change the meaning of a clinical encounter. In medical settings, documentation is used to track symptoms and histories, coordinate care between providers, and create a record that patients and clinicians may rely on later. Even small errors can compound when notes are copied forward or used as the basis for future decisions.

The auditors’ findings matter because clinical notes are part of the patient’s health record. Inaccurate documentation can complicate continuity of care, create confusion during referrals, and increase the chance of improper treatment. It can also lead to disputes over what was said or agreed to during a visit.

The report also lands as health systems increasingly adopt AI tools without uniform standards for validation, monitoring, and accountability. When technology is introduced into clinical workflows, the central question is not only whether it saves time, but whether it preserves the accuracy and completeness required for safe care.

The next step is likely to be tighter scrutiny of these tools in everyday practice. The auditors’ findings put pressure on clinics and health organizations using AI documentation to verify notes before they are placed into medical records and to evaluate whether current oversight is sufficient.

The development may also prompt discussions about procurement and governance: which products can be used, under what conditions, and with what performance requirements. Health providers using AI note takers may face renewed expectations to show how errors are detected and corrected, and how clinicians remain responsible for what is ultimately recorded.

For patients, the findings underscore the importance of reviewing their medical information when possible and asking for corrections if something is wrong. For clinicians, the report reinforces that automation does not remove the need for careful review, especially when the record can influence future care.

Ontario’s auditors have delivered a clear warning: in medicine, tools that routinely miss basic facts cannot be treated as reliable record keepers.